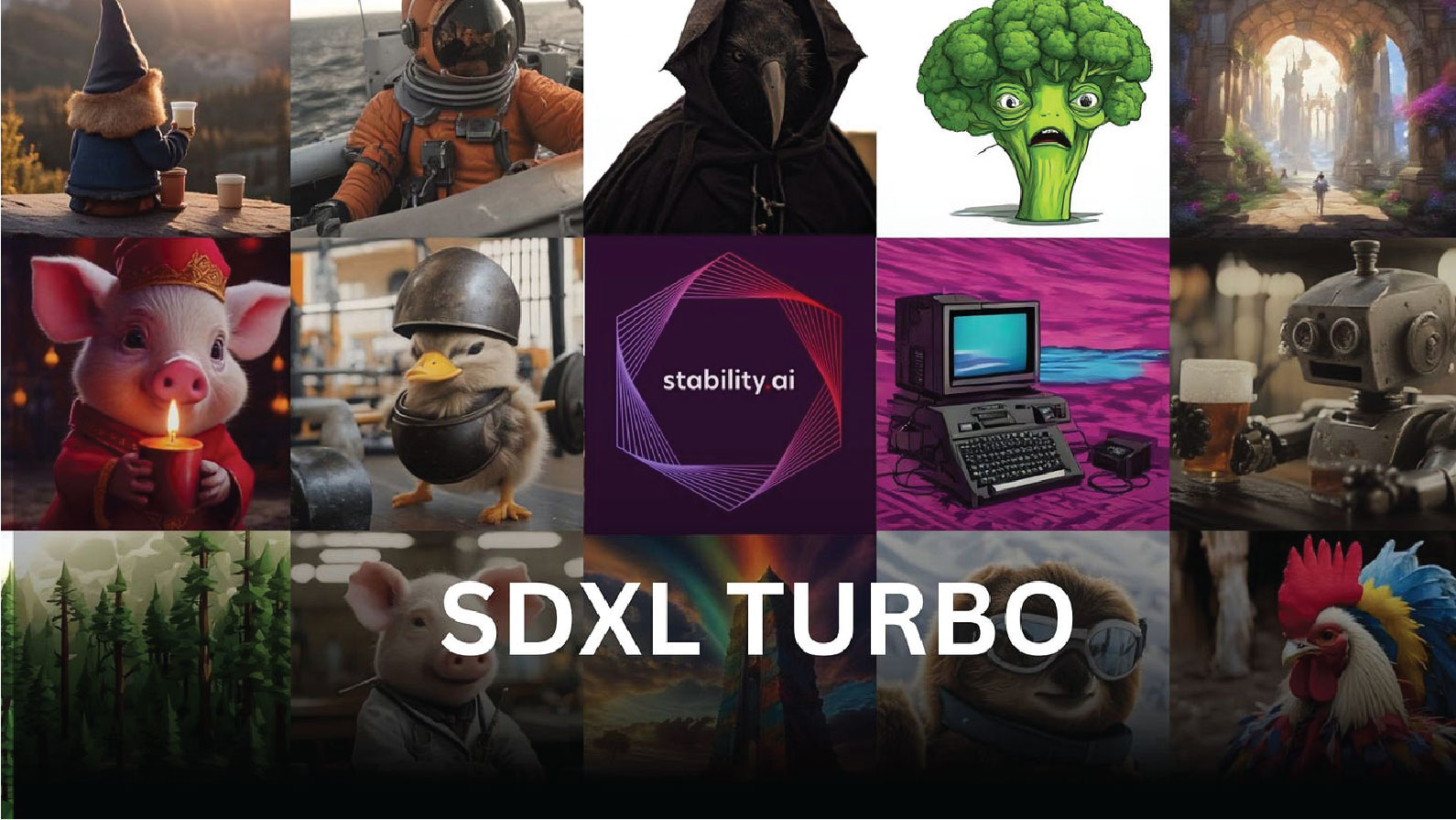

Stability AI introduced Stable Diffusion XL Turbo on Tuesday, an AI image-synthesis model designed to swiftly generate images based on written prompts. The company is promoting it as a “real-time” image generation tool due to its remarkable speed, which enables it to rapidly transform images from sources like webcams.

The standout feature of SDXL Turbo is its capacity to generate image outputs in a single step, a substantial advance over its predecessor, which required 20–50 steps. Stability credits this efficiency leap to a technique named Adversarial Diffusion Distillation (ADD). ADD combines score distillation, which lets the model learn from existing image-synthesis models, with adversarial loss, which trains the model against a discriminator that tries to distinguish real from generated images, ultimately improving the realism of the generated output.

While images produced by SDXL Turbo may not match the level of detail found in SDXL images generated at higher step counts, the model is not intended as a replacement for its predecessor. Still, the speed gains it offers are remarkable.

In a practical test, SDXL Turbo was run locally on an Nvidia RTX 3060 using Automatic1111 (the weights drop in just like SDXL weights). It generated a 3-step 1024×1024 image in approximately 4 seconds, compared with the 26.4 seconds required for a 20-step SDXL image with comparable detail. Smaller images generated even faster (under one second for 512×768), and a more powerful graphics card, such as an RTX 3090 or 4090, would shorten generation times further. Despite Stability’s single-step marketing, SDXL Turbo images showed optimal detail at around 3–5 steps per image.
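As a rough check on those timings, the short Python sketch below works out the overall speedup and the cost per step. It uses only the figures reported above and treats "approximately 4 seconds" as exactly 4.0, which is an assumption; the function and constant names are illustrative and not part of any Stability AI tooling.

```python
# Timings reported in the test above (RTX 3060, 1024x1024 output).
# Assumption: "approximately 4 seconds" is treated as exactly 4.0.
TURBO_SECONDS, TURBO_STEPS = 4.0, 3
SDXL_SECONDS, SDXL_STEPS = 26.4, 20


def seconds_per_step(total_seconds: float, steps: int) -> float:
    """Average wall-clock time spent on each sampling step."""
    return total_seconds / steps


def speedup(baseline_seconds: float, new_seconds: float) -> float:
    """How many times faster the new run is than the baseline."""
    return baseline_seconds / new_seconds


turbo_per_step = seconds_per_step(TURBO_SECONDS, TURBO_STEPS)
sdxl_per_step = seconds_per_step(SDXL_SECONDS, SDXL_STEPS)
overall_speedup = speedup(SDXL_SECONDS, TURBO_SECONDS)

print(f"SDXL Turbo: {turbo_per_step:.2f} s per step")
print(f"SDXL:       {sdxl_per_step:.2f} s per step")
print(f"Overall speedup: {overall_speedup:.1f}x")
```

Under these assumptions, the per-step cost of the two models is nearly identical (roughly 1.3 seconds each), so the overall gain of about 6.6x comes almost entirely from needing fewer steps rather than from each step running faster.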